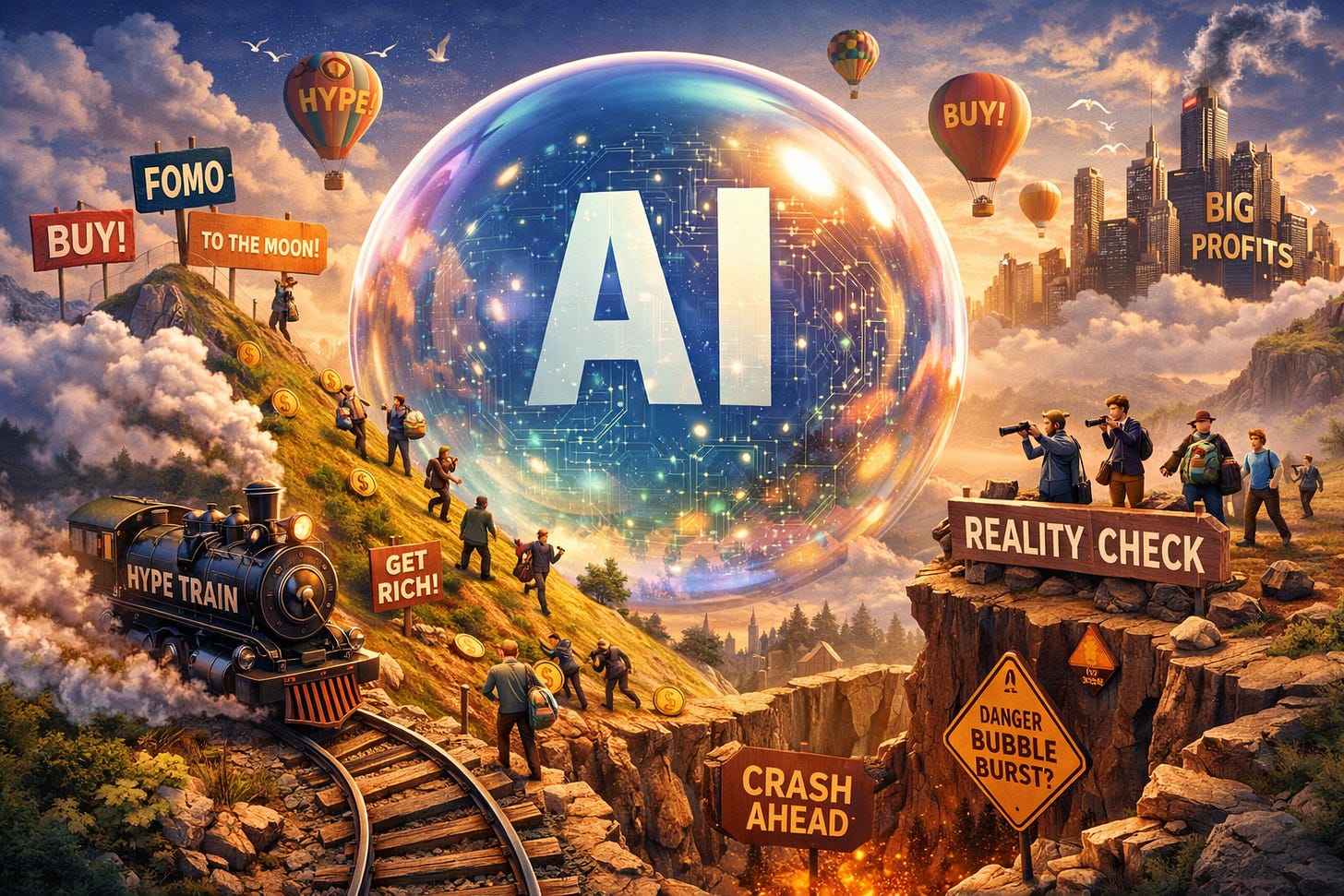

I like Goldman Sachs research. They tend to produce big pieces of research with enough raw data for you to make your own decisions. If you wanted a single image to explain the market's explosion higher, then these next three graphs would probably be it. In the first graph, GS forecasts that “agent” demand for tokens will lift token demand to 13 times its current level by 2030.

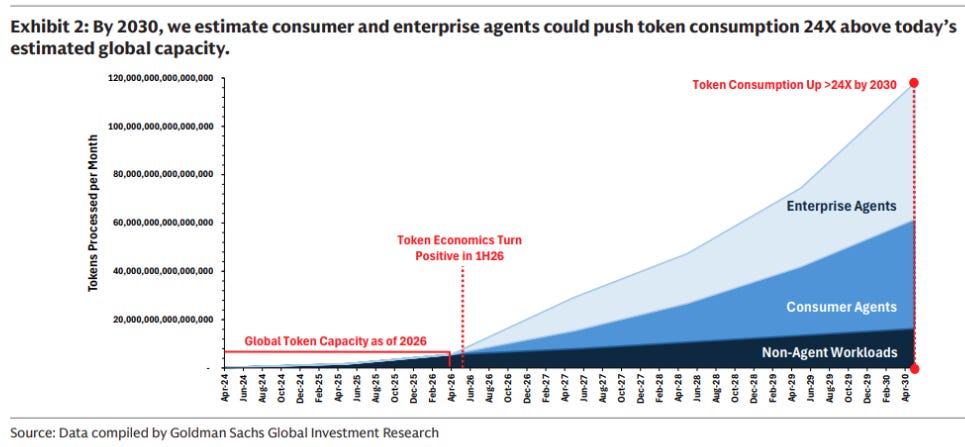

My guess is that this GS chart is an extrapolation from the sharp pickup in usage we have seen so far this year. This chart is taken from OpenRouter.ai, and only shows volumes going through OpenRouter.

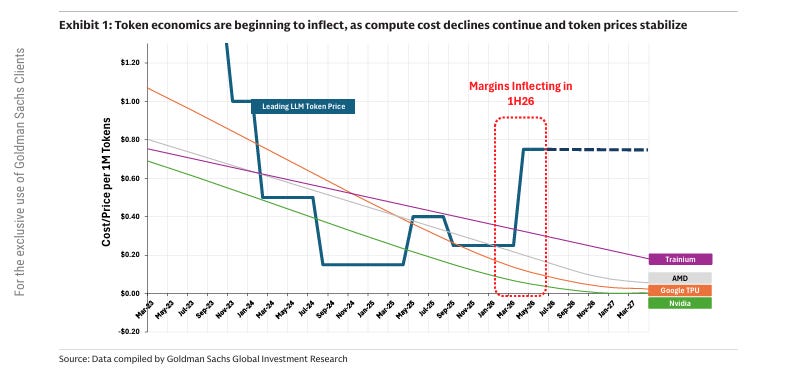

The graph below shows an inflection in token pricing towards profitability. If correct, this should imply that the AI boom could be self-financing, which would be bullish. Intriguingly, the OpenRouter data shows a dip in usage as prices rose - perhaps a sign that AI users are price sensitive. I don’t have enough data to be sure of that conclusion, but it is something to watch.

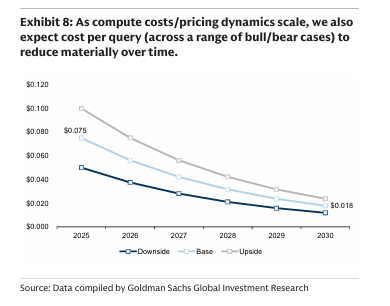

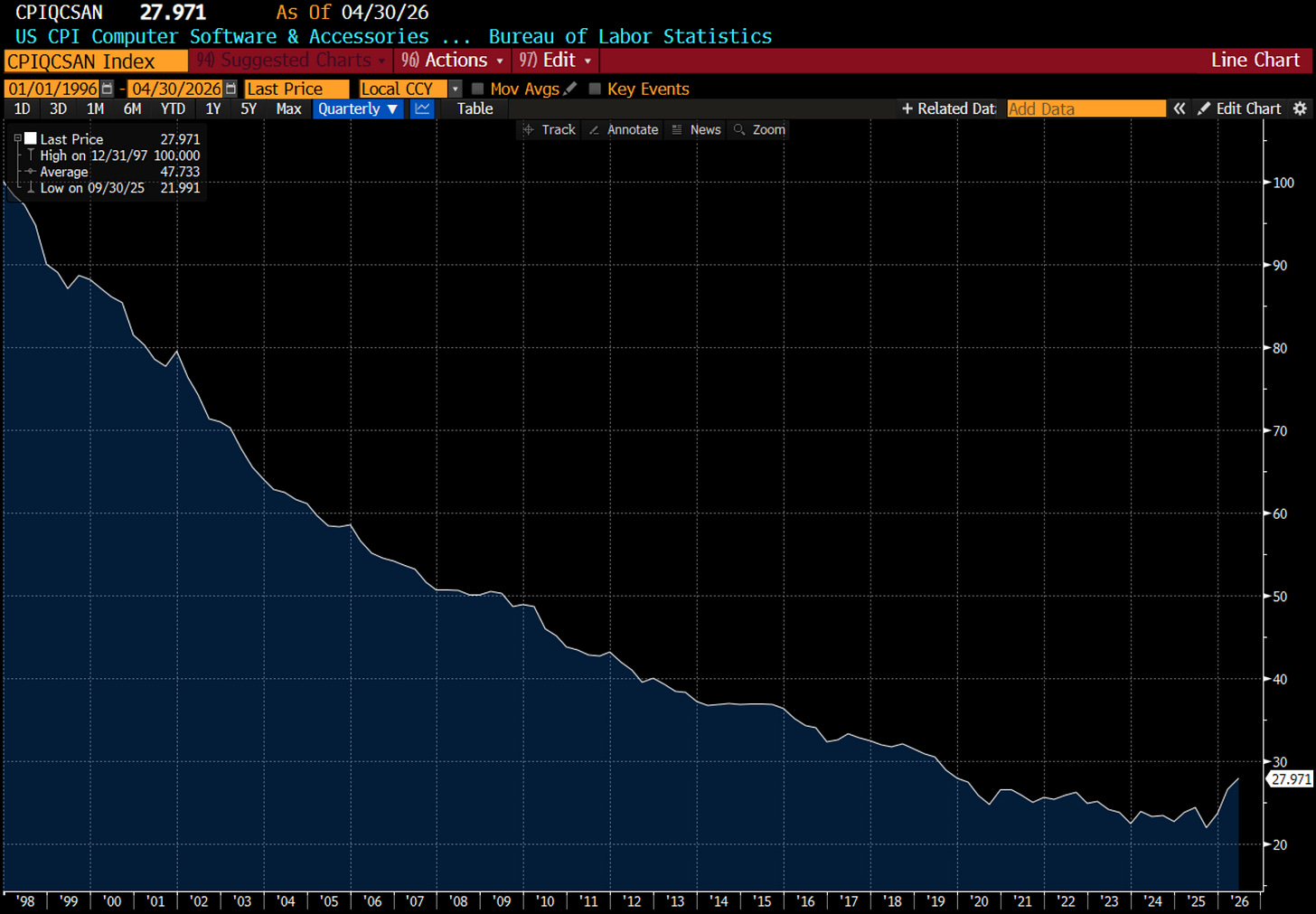

Despite this inflection in pricing, Goldman still sees pricing falling. If there is price sensitivity, then falling prices would be necessary for the volumes GS forecasts to be attained. So the rise in compute pricing is something to watch.

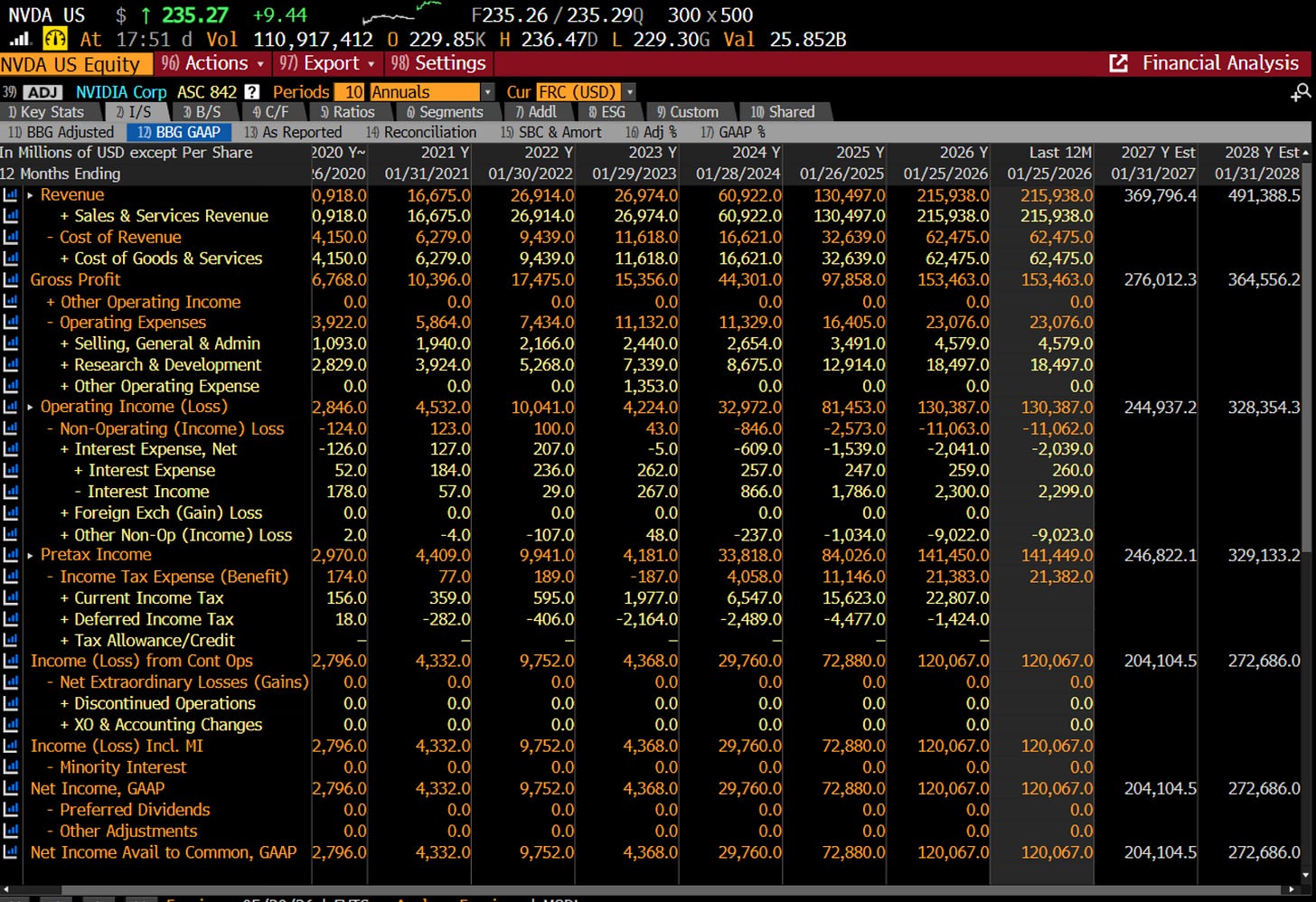

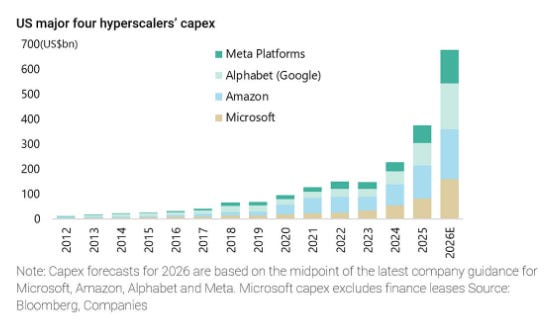

But do these numbers make sense? For 2030, GS estimates monthly consumption of 120,000 trillion tokens at a price of USD 0.18 per 1m tokens. This would imply spend on tokens of about USD22bn a month, or USD260 billion a year. For context, the world consumes around 100m barrels of oil a day, which at USD 100 a barrel implies USD 10bn a day, and USD3.65 trillion a year. This implies AI spend would be around 7% of oil spend. I struggle with those numbers, as the amount of investment announced by the hyperscalers and AI labs feels like they are looking for a business that generates far more revenue than that. Nvidia, which produces the chips that basically make AI possible, is forecast to have USD 500bn of revenue in 2028. Spending USD700bn annually for an industry that will generate only USD260bn in revenue does not make sense.
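For anyone who wants to check the arithmetic or plug in their own assumptions, here is a rough back-of-envelope sketch in Python. It uses only the figures quoted above. The function names are my own, and the last calculation (the token price needed to match USD700bn of annual spend) assumes the GS volume forecast stays fixed, which is a simplification rather than anything GS says.

```python
# Back-of-envelope check of the token spend vs oil spend comparison.
# All inputs are the figures quoted in the text; nothing here is a forecast of my own.

TOKENS_PER_MONTH = 120_000 * 10**12   # 120,000 trillion tokens a month (GS, 2030)
PRICE_PER_MILLION_USD = 0.18          # USD per 1m tokens (GS, 2030)
OIL_BARRELS_PER_DAY = 100 * 10**6     # roughly 100m barrels a day
OIL_PRICE_USD = 100                   # assumed USD 100 a barrel
ANNUAL_AI_CAPEX_USD = 700e9           # the USD700bn annual spend figure


def monthly_token_spend(tokens_per_month, price_per_million):
    """Monthly spend in USD: tokens, converted to millions, times price per million."""
    return tokens_per_month / 1e6 * price_per_million


def annual_oil_spend(barrels_per_day, price_per_barrel):
    """Annual oil spend in USD, using a 365-day year."""
    return barrels_per_day * price_per_barrel * 365


def price_needed_for_revenue(target_annual_revenue, tokens_per_month):
    """Price per 1m tokens that yields the target revenue, holding volume fixed."""
    annual_million_tokens = tokens_per_month * 12 / 1e6
    return target_annual_revenue / annual_million_tokens


monthly_spend = monthly_token_spend(TOKENS_PER_MONTH, PRICE_PER_MILLION_USD)
annual_spend = monthly_spend * 12
oil_spend = annual_oil_spend(OIL_BARRELS_PER_DAY, OIL_PRICE_USD)
share_of_oil = annual_spend / oil_spend
breakeven_price = price_needed_for_revenue(ANNUAL_AI_CAPEX_USD, TOKENS_PER_MONTH)

print(f"Monthly token spend: USD {monthly_spend / 1e9:.1f}bn")
print(f"Annual token spend:  USD {annual_spend / 1e9:.0f}bn")
print(f"Annual oil spend:    USD {oil_spend / 1e12:.2f}tn")
print(f"Token spend as share of oil spend: {share_of_oil:.1%}")
print(f"Price per 1m tokens to reach USD700bn: USD {breakeven_price:.2f}")
```

With these inputs, token spend comes out at about USD21.6bn a month, or roughly USD259bn a year, which is about 7% of the oil figure. On the same volumes, the price would need to be nearer USD 0.49 per 1m tokens for revenue to match USD700bn a year.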

Nvidia's sales are basically the other side of hyperscaler spending.

I think the key question in this analysis is whether prices for AI will keep falling or not. The AI boom is creating software inflation. Or in other words, rising cost pressures for users.

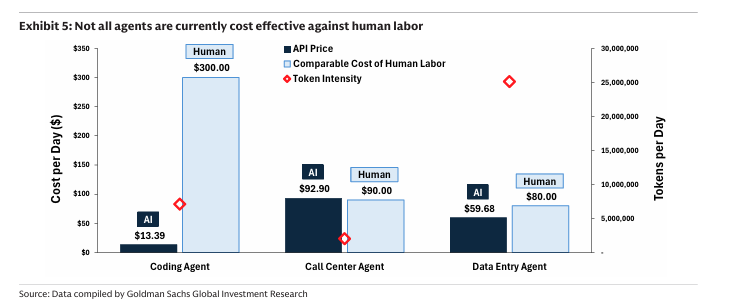

Again from the GS report, they estimate that an AI agent is much cheaper for coding, but only at parity for call centre and data entry work. When I talk to my friend who is building out an agent to replace a call centre, the costs for AI seem well below those of call centre agents - so I am not sure if this data point is correct.

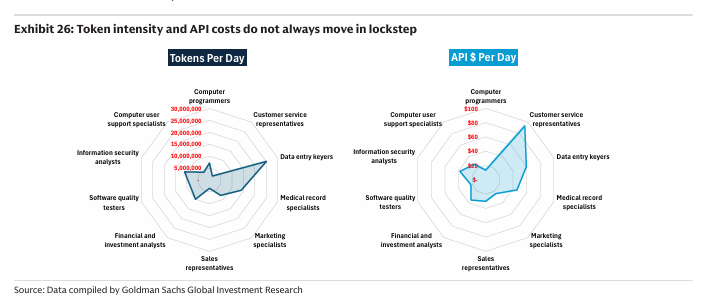

It is possible that start-ups have been subsidized by the cloud giants to drive demand and adoption - so the costs that start-ups see are below the actual costs. I think this is what this chart below is trying to say - that in essence token usage and API costs can be very different.

I think what I am seeing is that the AI boom is now pushing cost pressures through the AI and software sector. As the GS model shows, the general assumption is for ever lower prices - offset by higher volumes. But if the GS numbers on call centres and data entry operators are correct, rising prices should put a dent in adoption rates. That being said, I do not think hyperscalers would cut spending because of lower adoption - I think they would cut capex if competitors like OpenAI start running out of money and cut their own capex. From the data I have seen - I think that is a 50/50 proposition now. But that is a post for another time.